Privacy-Preserving Wearable Camera Setup for Dietary Event Spotting

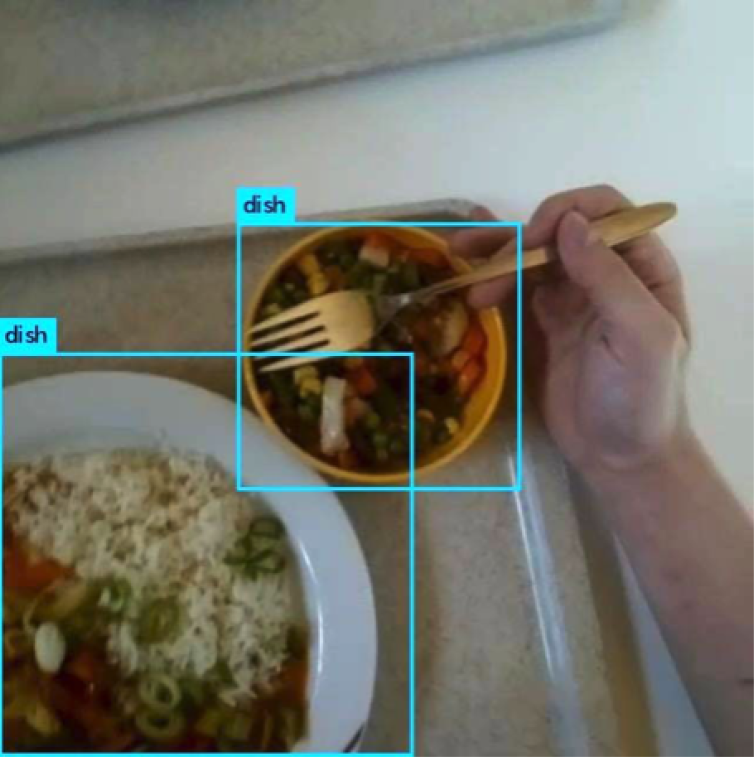

High-quality dietary assessment of free-living behaviour is the first step towards any effective diet coaching or weight management program. Continuous direct human observation is expensive and does not scale. An unobtrusive wearable camera that monitors dietary activities and provides relevant information from free-living settings may help to understand intake, compensate for underestimated food recall in self-reports, and improve the accuracy of dietary assessment. The images of a participant's dietary activities can subsequently be analysed and annotated manually to extract knowledge. We used a wearable head-mounted egocentric camera setup for dietary event spotting in free-living conditions.

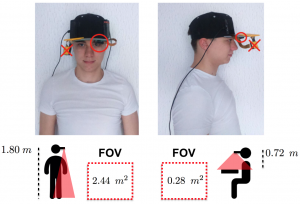

Wearable Setup

EgoCap setup. At the bottom, the field of view (FOV) of the camera is depicted.

Our egocentric camera system consists of a Raspberry Pi Zero combined with a camera module attached to a standard cap, all powered by a portable power bank with a capacity of 26.8 Ah (ANKER, Model A1210). The power bank was carried in a back trouser pocket or in a backpack, depending on the wearer's preference. Fixing the camera on the cap, pointing downwards, allowed us to reach an acceptable trade-off between privacy infringement and information retrieval. With this configuration, it is unlikely that other people are filmed by the camera, which reduces the privacy-infringing content captured in the videos.

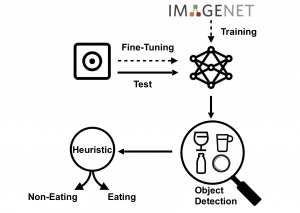

Ego-Vision-based Automatic Dietary Event Spotting

Our spotting framework.

Our assumption is that a dietary event is characterised by the use of dietary objects. We implemented an event spotting algorithm based on the identification of dietary objects in the video sequence. To detect dietary objects, we employed deep convolutional neural networks (CNNs) trained using the transfer learning paradigm. Once dietary objects were identified in the video frames, a heuristic assigned frames to eating or non-eating events.
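
The frame-level step of this pipeline can be illustrated with a minimal Python sketch. It is not the project's actual code: the `Detection` type, the function names, the default threshold of 0.5, and the class list are assumptions made for illustration (only the bottle class is named on this page). A frame is marked as dietary if the detector reports at least one dietary object with sufficient confidence.

```python
from typing import List, NamedTuple


class Detection(NamedTuple):
    label: str         # class name predicted by the object detector
    confidence: float  # detector score in [0, 1]


# Hypothetical class list for illustration; only "bottle" appears in the text.
DIETARY_CLASSES = {"bottle", "cup", "fork", "plate"}


def frame_is_dietary(detections: List[Detection], threshold: float = 0.5) -> bool:
    """Return True if any dietary object is detected at or above the threshold."""
    return any(
        d.label in DIETARY_CLASSES and d.confidence >= threshold
        for d in detections
    )


def label_frames(frames: List[List[Detection]], threshold: float = 0.5) -> List[bool]:
    """Map the per-frame detection lists of a video to per-frame dietary flags."""
    return [frame_is_dietary(dets, threshold) for dets in frames]
```

The resulting list of booleans is the kind of input a temporal heuristic would then group into eating and non-eating events.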

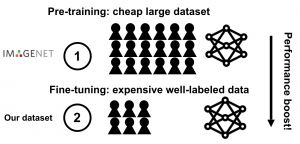

Transfer Learning

Transfer learning paradigm.

We used the Darknet framework, which provides an easily adjustable platform for training and evaluating network designs on a dataset of choice. As the network structure, we employed Yolo9000, a network that learns to predict and classify objects in images while maintaining high speed and performance. The Yolo9000 structure simplifies detection by introducing a new way of predicting object borders that produces less output and thus makes further classification of the object proposals faster. We pretrained the network on a very large dataset, i.e., ImageNet, which contains 1.2 million images in 1000 categories, and then used the same network as the initialisation for training on our dataset. We followed the so-called fine-tuning approach, which involves not only replacing and retraining the classifier on top of the network, but also fine-tuning the weights of the pretrained network by continuing backpropagation.

Object Detection

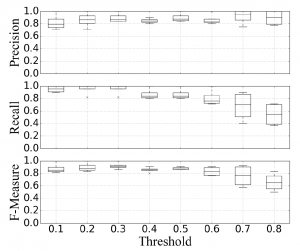

Retrieval performance of dietary object detection.

When the threshold was varied from 0.1 to 0.8, average precision increased from 78% to 98%, average recall decreased from 100% to 55%, and average F-measure decreased from 90% to 64%. Precision, recall, and F-measure of dietary object detection, together with mAP, are shown in Fig. 5. An overall mAP value of 51% was reached. The individual object classes show a similar trend as the threshold is tuned. One exception is the bottle class, which reached the highest precision and exhibited the lowest recall.
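
The trade-off between precision and recall under a varying threshold can be reproduced with a small Python sketch. This is an illustration only, not the evaluation code used for the figures above: the function name and the input format (a list of `(confidence, is_correct)` pairs plus the number of ground-truth objects) are assumptions, and each ground-truth object is assumed to be matched by at most one detection.

```python
from typing import List, Tuple


def precision_recall_f(
    detections: List[Tuple[float, bool]],
    n_ground_truth: int,
    threshold: float,
) -> Tuple[float, float, float]:
    """Compute precision, recall and F-measure at one confidence threshold.

    detections: (confidence, is_correct) pairs, where is_correct marks a
    detection that matches a ground-truth object (illustrative format).
    """
    # Keep only detections the detector is confident enough about.
    kept = [ok for conf, ok in detections if conf >= threshold]
    tp = sum(kept)              # correct detections that were kept
    fp = len(kept) - tp         # incorrect detections that were kept
    fn = n_ground_truth - tp    # ground-truth objects that were missed

    # Convention chosen here: a metric with a zero denominator is reported as 0.
    precision = tp / (tp + fp) if kept else 0.0
    recall = tp / (tp + fn) if n_ground_truth else 0.0
    denom = precision + recall
    f_measure = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f_measure
```

Raising the threshold discards low-confidence detections, which tends to remove false positives (higher precision) but also drops some correct detections (lower recall), matching the trend reported above.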

Heuristic for Dietary Event Spotting

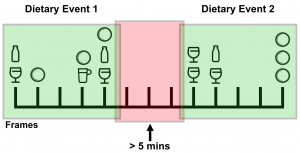

Temporal heuristic.

A simple heuristic was chosen to characterise a true positive. If three or more consecutive frames contained true positives, a dietary event was considered spotted. This short duration, i.e., three seconds, was chosen so as not to miss the shortest dietary events, such as a sip of water or a single food intake. We compared class labels between ground truth and detected instances. Dietary events are not isolated events; other activities, e.g., socialising or working at a desk, are commonly carried out at the same time. To assign practical meaning to activity segments, we treated gaps of less than five minutes as temporary interruptions of an ongoing dietary event.
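
A minimal Python sketch of such a temporal heuristic is given below. It is an illustration under stated assumptions rather than the implementation used in this work: the function name `spot_events` and its parameters are hypothetical, and the default of one frame per second is inferred from the text equating three frames with three seconds. The gap between two runs is measured from the last frame of one run to the first frame of the next.

```python
from typing import List, Sequence, Tuple


def spot_events(
    flags: Sequence[bool],
    fps: float = 1.0,          # assumed frame rate (three frames = three seconds)
    min_run: int = 3,          # minimum number of consecutive positive frames
    max_gap_s: float = 300.0,  # gaps shorter than five minutes are bridged
) -> List[Tuple[float, float]]:
    """Return dietary events as (start_s, end_s) pairs in seconds."""
    # Step 1: collect runs of at least min_run consecutive positive frames.
    runs = []
    start = None
    for i, flag in enumerate(list(flags) + [False]):  # sentinel closes a final run
        if flag and start is None:
            start = i
        elif not flag and start is not None:
            if i - start >= min_run:
                runs.append((start, i - 1))
            start = None

    # Step 2: merge runs separated by less than max_gap_s into one event.
    events: List[Tuple[float, float]] = []
    for first, last in runs:
        start_s, end_s = first / fps, last / fps
        if events and start_s - events[-1][1] < max_gap_s:
            events[-1] = (events[-1][0], end_s)
        else:
            events.append((start_s, end_s))
    return events
```

For example, two short runs of positive frames ten seconds apart are reported as a single event, while an isolated two-frame detection is ignored.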

Retrieval performance of dietary event spotting.

Sample Video

Contact

Dr. Giovanni Schiboni

- Job title: Researcher

- Phone number: +49 9131 85-23604

- Email: giovanni.schiboni@fau.de